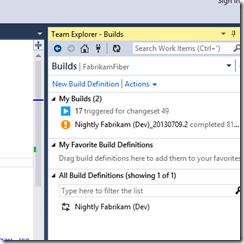

This weekend I worked on the upgrade of our main production Team Foundation Server. Everything went fine, even though not everything went according to the slick plan we had in mind. The whole effort has been going on for longer than just this weekend, though, and it comes with several takeaways.

Here is what I learned, all of it involving our shiny Team Foundation Server 2013. Keep the scenario in mind: thousands of users all around the world and terabytes of databases. Here we go:

- Plan, but do not overplan.

We had a plan, but you cannot cover everything. You cannot plan every single step, nor have a plan B, C and D for each of them. I am not saying you should go without a plan; I am suggesting you stay confident and flexible.

Start planning early enough that the right people are involved. Keep in mind the basics of TFS and its known issues, as well as the history of the server (in our case it was essential), and use the TFS Upgrade Guide as a guideline to follow.

- Test the core, test the basics.

This TFS instance has survived every upgrade from 2005 on, and along the way we discovered things we thought were impossible. Your team’s skills will let you fix the problems you find.

So test the core and verify that all the essential pillars work. If you find issues, try to fix them, or plan a deferral (maybe with the CSS?) if they are not critical.
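As a rough illustration of that fix-or-defer triage, here is a minimal Python sketch. Everything in it is an assumption made for the example: the `Check` class, the `triage` function, the pillar names and the placeholder lambdas are hypothetical and do not call any real TFS API. In practice you would replace each lambda with a real probe against your test server.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Check:
    name: str                 # hypothetical label for one "pillar"
    run: Callable[[], bool]   # returns True when the pillar works
    critical: bool            # a critical failure must be fixed before go-live


def triage(checks: List[Check]) -> Tuple[List[str], List[str]]:
    """Run every check and split the failures into blockers and deferrals."""
    blockers: List[str] = []
    deferrals: List[str] = []
    for check in checks:
        try:
            ok = check.run()
        except Exception:
            ok = False  # a check that crashes counts as a failure
        if not ok:
            (blockers if check.critical else deferrals).append(check.name)
    return blockers, deferrals


# Placeholder results only: swap the lambdas for real probes.
checks = [
    Check("version control", lambda: True, critical=True),
    Check("work item tracking", lambda: True, critical=True),
    Check("builds", lambda: False, critical=True),
    Check("reporting", lambda: False, critical=False),
]

blockers, deferrals = triage(checks)
print("fix before go-live:", blockers)
print("can be deferred:", deferrals)
```

The point of the split is that a short, explicit list of blockers keeps the test run focused on the core, while everything else gets written down and scheduled instead of holding up the upgrade.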

Do not start by trying the latest and greatest tech combo. For example, do not upgrade the test server to 2013 and the build servers at the same time. Test the existing build servers (or clones of them) against the 2013 server first, and test their upgrade only when you are sure of the result.

Write as much documentation as you can, but again, you cannot cover 100% of the cases.

- Problems are problems only if they come up in production. Otherwise they are issues to fix.

You might find something that affects only a small percentage of users rather than all of them. You can work on it later.

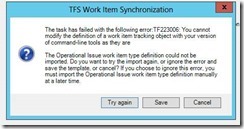

On the other hand, we hit a very nasty problem during our live production upgrade. We had no plan for it, as it had not happened in testing. What did we do? We tried several solutions (one worked, luckily) while someone else worked on a mitigation plan to use in case none of them worked.

We did not have to use it, but if you are running a service you have to make tradeoffs, and stopping the whole service because of just one part of it is unacceptable.

Remember that Team Foundation Server is modular, so you can exclude a part of it in case of problems, and that upgrading from the previous version (2012 in our case) is usually almost effortless.